290,000 Americans have already died from COVID-19, yet people protest against wearing masks and are skeptical of vaccinations. How does disinformation manipulate people into risky behavior? Who gains from spreading disinformation that might result in avoidable deaths? Why does YouTube spread disinformation?

Background

This blog is based on discussions with Dr. Nitin Agarwal of UA Little Rock, an expert in disinformation. His research is supported by the U.S. National Science Foundation (NSF), the Office of Naval Research (ONR), the Army Research Office (ARO), the Defense Advanced Research Projects Agency (DARPA), the Air Force Research Laboratory (AFRL), and the Department of Homeland Security (DHS). Nitin directs the Collaboratorium for Social Media and Online Behavioral Studies (COSMOS), which researches disinformation dissemination and participates in the national Tech Innovation Hub launched by the U.S. Department of State’s Global Engagement Center to defeat foreign-based propaganda.

Other resources:

- Trend Micro Report on using Propaganda to Influence Opinion

- Lockheed Martin Cyber Kill Chain: Proactively Detect Persistent Threats

- YouTube By The Numbers

- COVID Tracking Project

Understand the threat

The Cyber Kill Chain model developed by Lockheed Martin explains the steps that an adversary must complete to achieve their objectives. The kill chain model shows how to recognize disinformation-based social engineering attacks designed to manipulate public opinion.

Spreading disinformation

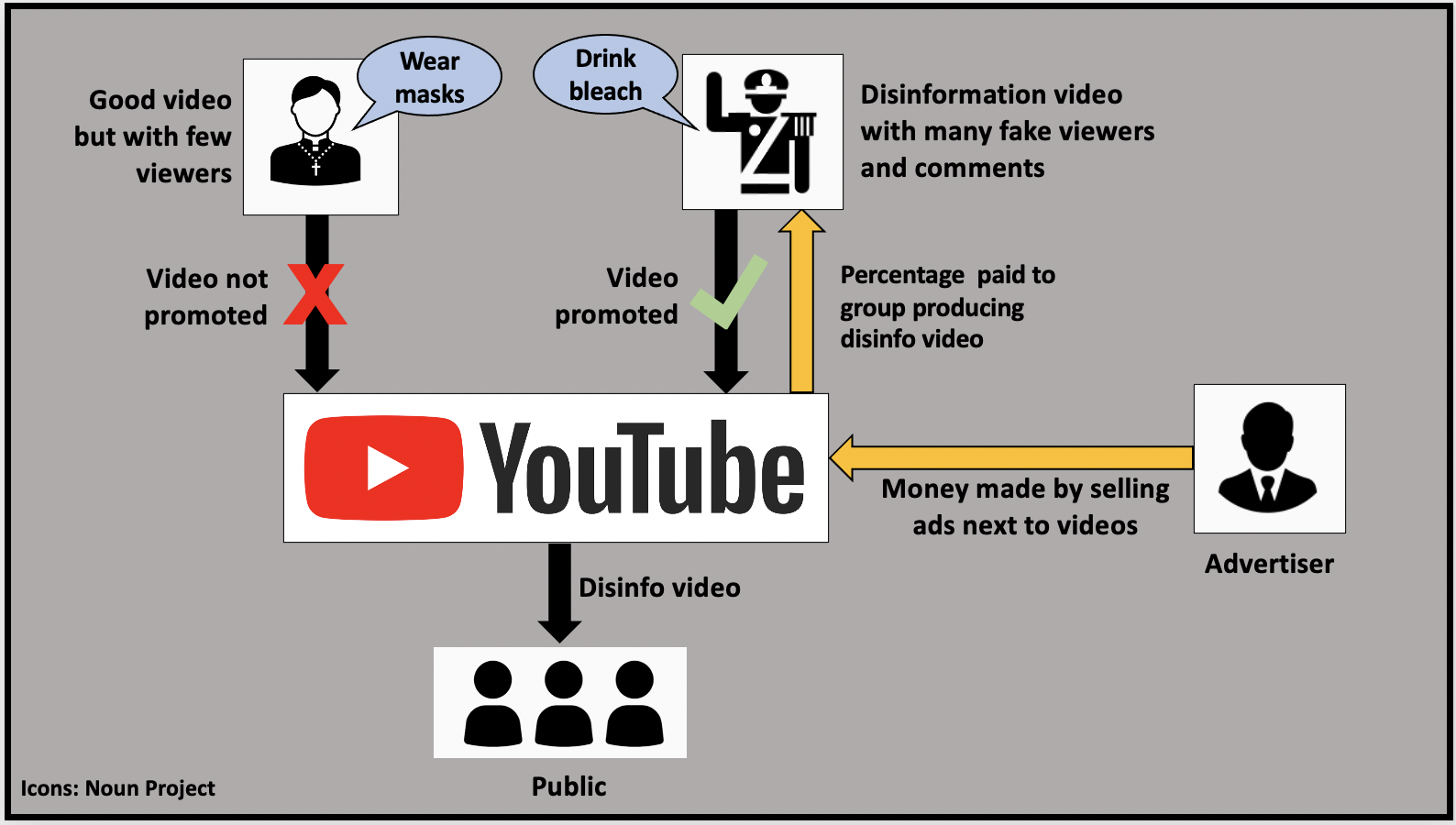

Social media is ideally suited to spreading disinformation, as stories can be planted without the due diligence of professional journalists and then amplified through bots and paid workers. YouTube spreads disinformation effectively because its algorithm determines what 70% of people watch. YouTube makes money by selling ads, so the longer a viewer stays on its platform, the more money it makes. YouTube makes the same amount of money serving ads next to disinformation videos as it does serving ads next to videos with truthful content. Disinformation tends to be sensational and plays to people’s biases, making it more likely to hook viewers. How big is YouTube?

- YouTube is the 2nd most visited site with 37% of all mobile internet traffic

- 73% of U.S. adults use YouTube whose revenue in 2019 was $15.1 billion

- YouTube video influencers with 500-5k followers charge about $315 per video

- YouTube pays $18 per 1,000 ad views on average and paid over $2 billion to content creation partners

How YouTube picks videos to recommend

YouTube, like other social media firms, makes money by selling ads and collecting information on users that can be sold to marketers. The longer people stay on YouTube, the more ads they can be served. To keep them watching, YouTube recommends videos based on the number of times each has already been viewed and the number of likes and comments it has received. Disinformation actors know this and manipulate the YouTube algorithm into recommending their videos by:

- Releasing many videos on a topic in order to create the impression of a lot of interest in that topic

- Having bots and paid workers ‘view’ and ‘like’ their videos

- Having mobs of commenters add their comments to the videos. YouTube suggests to viewers that, “people who commented on the video you are watching also commented on a bunch of these other videos. So maybe you’d like to see those videos too…”
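
To see why inflated counts matter, here is a toy sketch in Python. It is not YouTube's actual algorithm, which is not public. The `engagement_score` function, its weights and the sample videos are all hypothetical, chosen only to illustrate how any ranking that rewards views, likes and comments can be gamed by bots and paid workers.

```python
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    views: int
    likes: int
    comments: int


def engagement_score(video: Video) -> float:
    """Hypothetical score: a weighted sum of views, likes and comments.

    The weights are illustrative assumptions, not values used by YouTube.
    """
    return 1.0 * video.views + 5.0 * video.likes + 10.0 * video.comments


def rank(videos: list[Video]) -> list[str]:
    """Return titles ordered from highest to lowest engagement score."""
    ordered = sorted(videos, key=engagement_score, reverse=True)
    return [v.title for v in ordered]


# Made-up numbers for two competing videos.
honest = Video("Public health briefing", views=10_000, likes=400, comments=50)
planted = Video("Sensational hoax", views=2_000, likes=50, comments=10)

before = rank([honest, planted])

# Simulate bots and paid workers adding fake views, likes and comments.
boosted = Video(
    planted.title,
    views=planted.views + 8_000,
    likes=planted.likes + 1_000,
    comments=planted.comments + 500,
)

after = rank([honest, boosted])

print("Before manipulation:", before)
print("After manipulation: ", after)
```

In this made-up example the hoax video starts in second place and moves to first once the fake engagement is added, even though no real viewer changed their behavior.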

Disinformation videos can be detected by the large number of videos a user posts (dozens per day) and by sudden spikes in the number of views, subscribers and comments.
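
These warning signs can be turned into simple checks. The Python sketch below is a rough illustration, not the method used by COSMOS or YouTube. The function names, the cutoff of 24 uploads per day (two dozen) and the "10 times the median day" spike rule are assumptions made for this example, and the sample numbers are invented.

```python
from statistics import median


def posts_too_often(uploads_per_day: list[int], max_per_day: int = 24) -> bool:
    """Flag a channel whose busiest day exceeds max_per_day uploads.

    The default of 24 (two dozen) is an assumed cutoff for "dozens per day".
    """
    return max(uploads_per_day, default=0) > max_per_day


def has_spike(daily_counts: list[int], factor: float = 10.0) -> bool:
    """Flag a series if any day exceeds `factor` times the median day.

    Works for daily views, new subscribers or comments. The factor is an
    assumed threshold, not an established standard.
    """
    if not daily_counts:
        return False
    baseline = max(median(daily_counts), 1)  # avoid a zero baseline
    return any(count > factor * baseline for count in daily_counts)


def looks_suspicious(uploads_per_day: list[int], daily_views: list[int]) -> bool:
    """Combine both warning signs: heavy posting or a sudden spike in views."""
    return posts_too_often(uploads_per_day) or has_spike(daily_views)


# Invented sample data for two channels over five days.
organic = looks_suspicious([1, 0, 2, 1, 0], [900, 1000, 1100, 950, 1050])
boosted = looks_suspicious([1, 0, 2, 1, 0], [900, 1000, 50000, 950, 1050])
flooding = looks_suspicious([30, 40, 36, 28, 33], [900, 1000, 1100, 950, 1050])

print(organic, boosted, flooding)
```

A flag from checks like these is only a prompt for a closer look. Videos that go viral for legitimate reasons can also show sudden spikes.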

Follow the (blood) money

Blood money comes at the cost of someone else’s life. Beyond political motivations, where is the money in spreading disinformation?

- Firms that sell phantom YouTube subscribers so as to boost a video’s ranking

- App vendors who sell apps to create disinformation and deepfake videos

- YouTube profits from selling ads next to videos filled with disinformation. A percentage of this revenue is paid to the person behind the video that generated the viewership.

- Advertisers may be paying YouTube for ads being shown to phantom viewers. What do they get for their advertising fees?

Take Away

Shouting ‘FIRE!’ in a crowded building may land you in court, but some vendors will still sell the shouter a megaphone. The high-tech equivalent is a platform like YouTube that profits from distributing disinformation that may harm the public. There has to be more regulation in the public interest.

Firms advertising on YouTube should also check that their YouTube ads are being seen by real humans and not just bots.

Deepak

DemLabs

DemLabs is a project of the Advocacy Fund